Simple, basic metrics to measure AI model performance

What are Accuracy, Recall, Precision, TP, FP, TN, and FN?

Today, I am going to explain the basic metrics used to measure an AI model. I wanted to keep this blog very short, after learning these metrics the hard way [1]. Since I am writing this during COVID times, consider an example where I build an AI model that classifies a person's report as COVID or not.

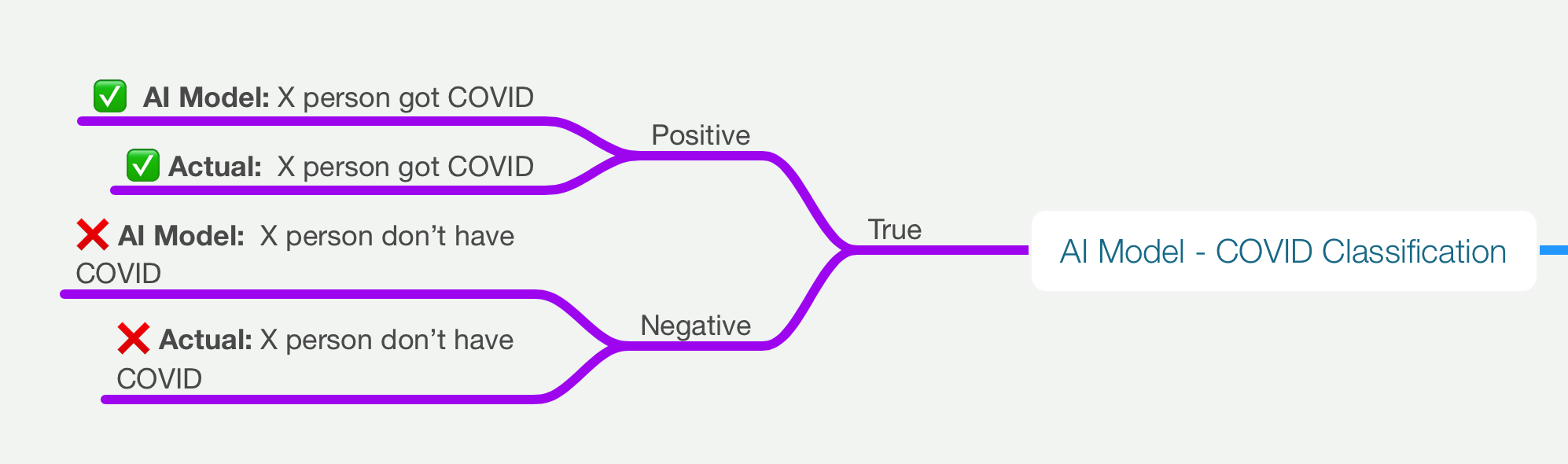

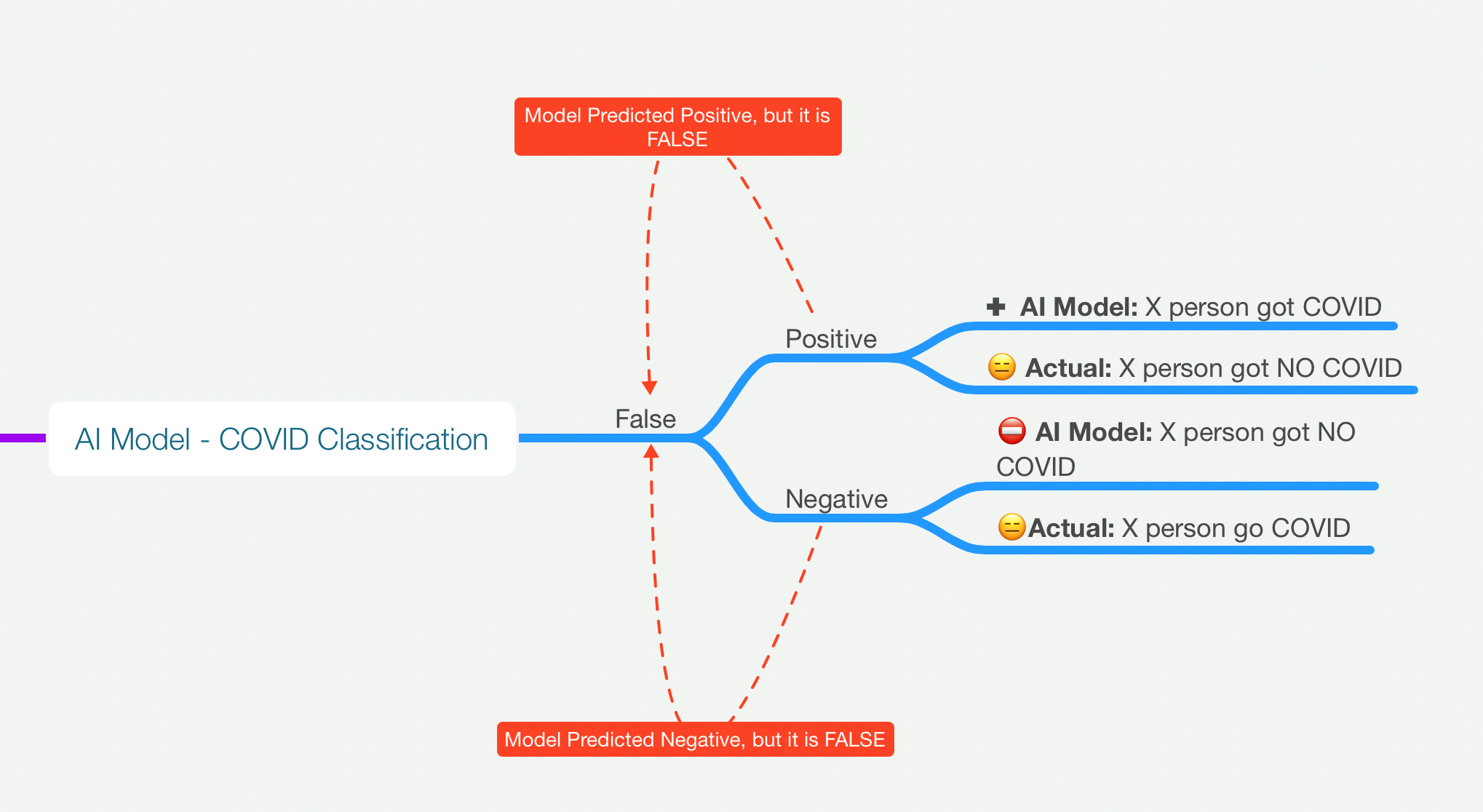

Terms - TP, TN, FP, FN

True Positive and True Negative

False Positive and False Negative

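To avoid confusion, read each term from right to left. For example, FALSE-POSITIVE means the model predicted the output as positive, but that prediction is false (wrong). Likewise, TRUE-NEGATIVE means the model predicted negative, and that prediction is true (right).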
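To make the four terms concrete, here is a minimal sketch that counts them from two lists of labels. The function name `confusion_counts` and the sample reports are made up for illustration, and `True` is assumed to mean "COVID".

```python
def confusion_counts(actual, predicted):
    """Count TP, TN, FP, FN for binary labels (True = COVID, False = not COVID)."""
    tp = tn = fp = fn = 0
    for a, p in zip(actual, predicted):
        if p and a:
            tp += 1  # predicted positive, and that is true
        elif p and not a:
            fp += 1  # predicted positive, but that is false
        elif not p and a:
            fn += 1  # predicted negative, but that is false
        else:
            tn += 1  # predicted negative, and that is true
    return {"TP": tp, "TN": tn, "FP": fp, "FN": fn}


# Hypothetical reports: what each person really has vs. what the model said.
actual = [True, True, False, False, True, False]
predicted = [True, False, False, True, True, False]

counts = confusion_counts(actual, predicted)
print(counts)  # {'TP': 2, 'TN': 2, 'FP': 1, 'FN': 1}
```

Every metric in the rest of this post is built from these four counts.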

Metrics

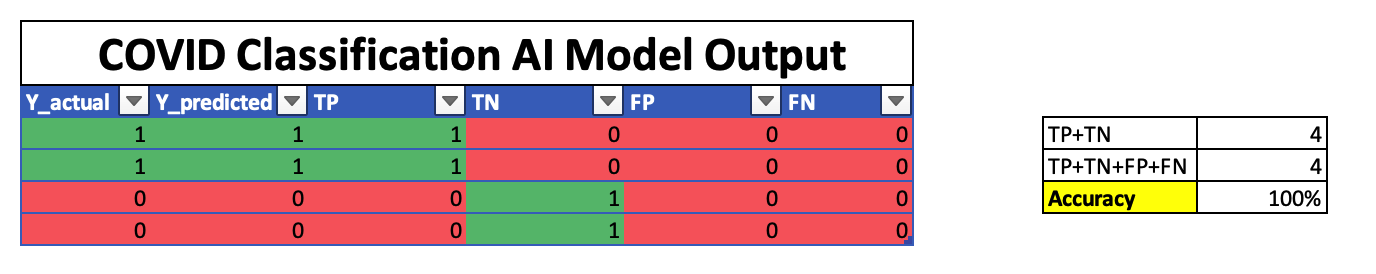

Accuracy

A 100% accurate model predicts every actual label correctly, something like the table below. In practice, a model that is 100% accurate is usually overfitting the data, and it needs to be revised.

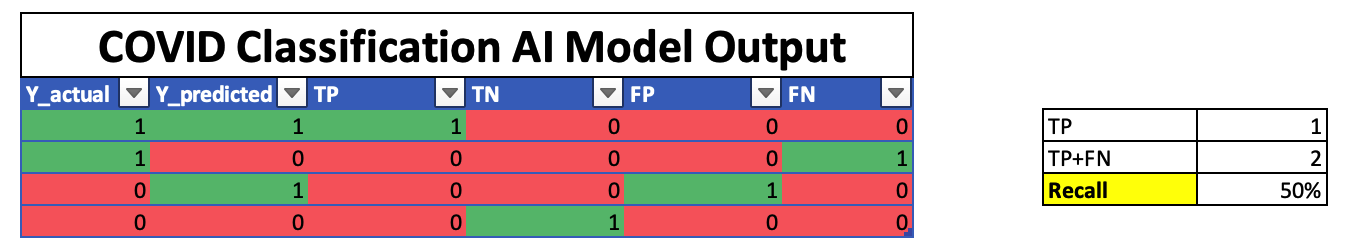
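As a rough sketch, accuracy is the share of all predictions that were right, that is (TP + TN) / (TP + TN + FP + FN). The function name `accuracy` and the counts below are assumptions for illustration, not numbers taken from the tables in this post.

```python
def accuracy(tp, tn, fp, fn):
    """Fraction of all predictions (positive and negative) that were correct."""
    total = tp + tn + fp + fn
    if total == 0:
        raise ValueError("no predictions to score")
    return (tp + tn) / total


# Hypothetical counts: a perfect model has no false positives or false negatives.
perfect = accuracy(tp=5, tn=5, fp=0, fn=0)
# Hypothetical counts: 4 correct predictions out of 6.
mixed = accuracy(tp=2, tn=2, fp=1, fn=1)

print(perfect)          # 1.0
print(round(mixed, 2))  # 0.67
```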

Recall

What proportion of actual positives was identified correctly? [1]

The table below has 50% recall, meaning that of all the people who actually have COVID, the model identified only 50% of them as COVID. The other 50% were missed (false negatives).

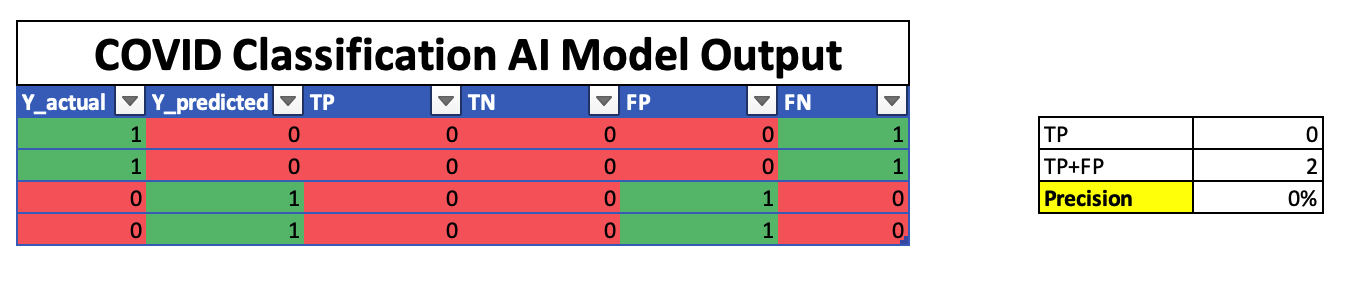
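A minimal sketch of the recall calculation, TP / (TP + FN), is below. The function name `recall` and the counts are hypothetical, chosen only to reproduce a 50% result.

```python
def recall(tp, fn):
    """Fraction of actual positives that the model correctly flagged as positive."""
    actual_positives = tp + fn
    if actual_positives == 0:
        raise ValueError("recall is undefined when there are no actual positives")
    return tp / actual_positives


# Hypothetical counts: 2 people really have COVID, the model caught only 1 of them.
half = recall(tp=1, fn=1)
print(half)  # 0.5
```

Note that false positives do not appear in this formula at all: recall only looks at people who are actually positive.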

Precision

What proportion of positive identifications was actually correct? [1]
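A minimal sketch of the precision calculation, TP / (TP + FP), is below. The function name `precision` and the counts are hypothetical and chosen only for illustration.

```python
def precision(tp, fp):
    """Fraction of positive predictions that were actually positive."""
    predicted_positives = tp + fp
    if predicted_positives == 0:
        raise ValueError("precision is undefined when the model predicts no positives")
    return tp / predicted_positives


# Hypothetical counts: the model flagged 2 people as COVID, and neither has it.
none_right = precision(tp=0, fp=2)
# Hypothetical counts: the model flagged 4 people as COVID, and 3 really have it.
mostly_right = precision(tp=3, fp=1)

print(none_right)    # 0.0
print(mostly_right)  # 0.75
```

Here false negatives do not appear: precision only looks at the cases the model predicted as positive.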

In the example below, precision is 0%, meaning none of the model's positive predictions is right. The model says "this person has COVID", but in reality the person doesn't have COVID.